The Frustrations of Troubleshooting Telecommunications Link Errors in 2025

Exploring Common Challenges in Troubleshooting Telecommunications Link Errors in 2025

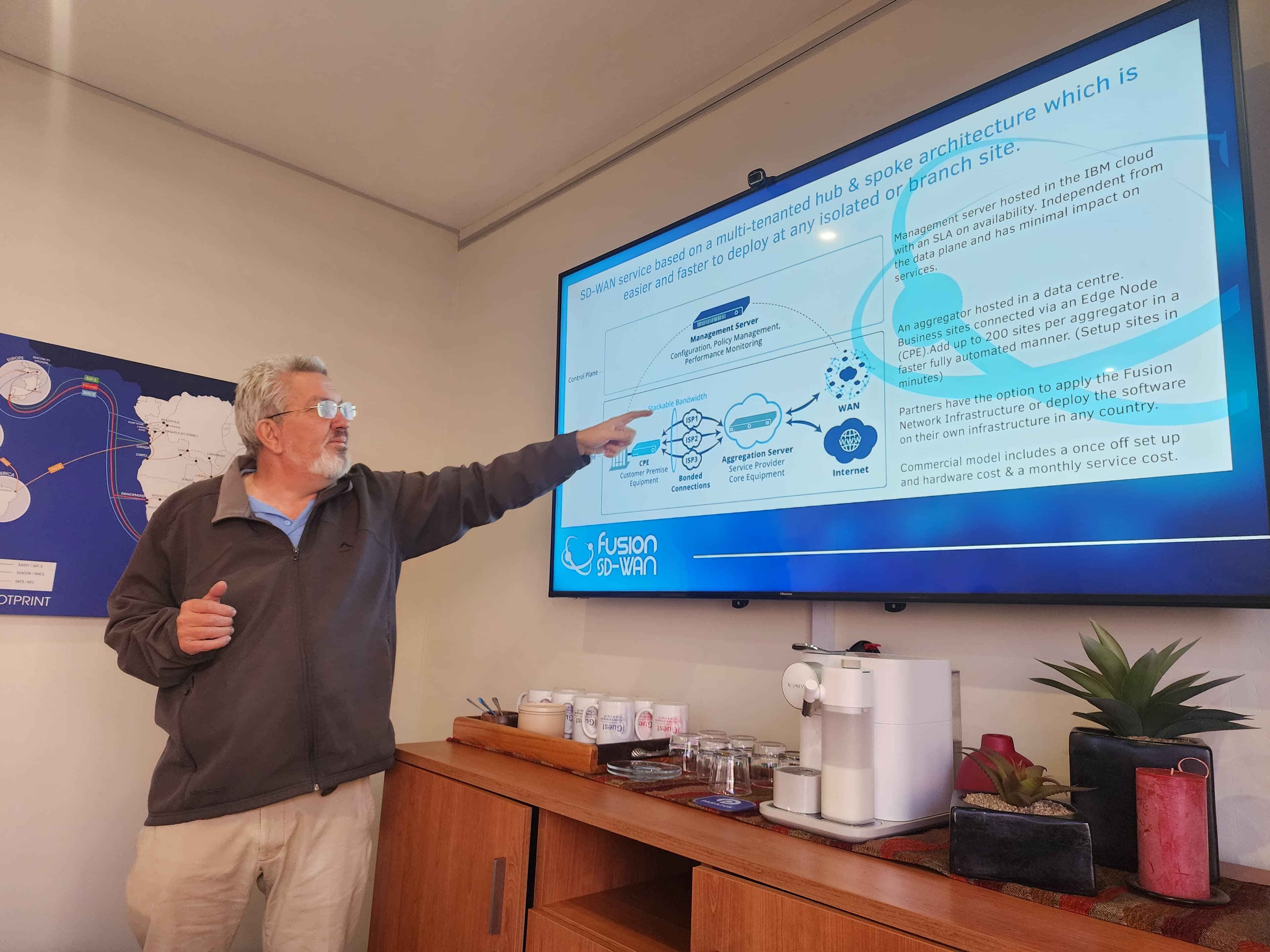


In the fast-paced world of telecommunications, where businesses and individuals rely on seamless connectivity, encountering degraded service is not just an inconvenience—it’s a productivity killer. For network administrators and engineers tasked with maintaining these critical links, the process of troubleshooting performance issues can be maddening, especially when diagnostic tools and service provider responses fall short of expectations. A common frustration arises when querying error counters via Simple Network Management Protocol (SNMP) Management Information Base (MIB) walks, only to find that the error counts on either side of a telecommunications link differ, and the provided data—such as a bit rate graph—does little to pinpoint the issue. This article delves into why error counters differ across link endpoints, the complexities of multi-hop telecommunications paths, the limitations of troubleshooting tools like LLDP and ping, and the exasperation of dealing with outdated practices in 2025 that have persisted since the 1990s.

Why Error Counters Differ in SNMP MIB Walks

SNMP MIB walks, particularly querying objects like those in the IF-MIB (e.g., ifInErrors, ifOutErrors), are a standard method for monitoring interface performance in telecommunications equipment. However, a frequent observation is that error counters reported on either side of a link—say, between Device A and Device B—rarely match. This discrepancy is not a flaw in the system but a reflection of how errors are detected and reported.

Input Errors vs. Output Errors

Input Errors (ifInErrors): These counters track errors in packets or frames received by an interface. For a link connecting Device A and Device B:

Device A’s ifInErrors counts issues in transmissions from Device B to Device A, such as Cyclic Redundancy Check (CRC) errors, framing errors, runts, or giants caused by signal degradation, noise, or protocol mismatches.

Device B’s ifInErrors counts errors in the opposite direction, from Device A to Device B.

Since each direction of a link (transmit and receive) operates independently, errors are often asymmetric. For example, duplex fiber optic links typically use separate fibers for TX (transmit) and RX (receive), so a bent or dirty fiber may degrade one direction without affecting the other.

Output Errors (ifOutErrors): These counters reflect issues encountered during packet transmission from an interface, such as underruns, late collisions (in half-duplex Ethernet), or carrier sense problems. These errors are local to the transmitting device and do not directly correlate with what the receiving device reports. For instance, Device A’s ifOutErrors are counted independently of Device B’s ifInErrors: a frame that A fails to transmit never reaches B, so it is not counted there, while a frame that A transmits cleanly can still be corrupted in transit and counted only by B.
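The directional reading of input errors described above can be sketched in a few lines of Python. This is a minimal illustration, not a monitoring tool: the `InterfaceCounters` class, the `suspect_directions` helper, and the sample numbers are all hypothetical, and the values are assumed to be counter increases over one polling window rather than raw totals.

```python
from dataclasses import dataclass


@dataclass
class InterfaceCounters:
    """Counter increases for one end of a link (hypothetical structure)."""
    device: str
    in_errors: int   # increase in ifInErrors over the polling window
    out_errors: int  # increase in ifOutErrors over the polling window


def suspect_directions(a, b, threshold=0):
    """Return the link directions whose receiving end logged input errors.

    Input errors are counted by the receiver, so errors on B implicate
    the A -> B direction, and errors on A implicate B -> A.
    """
    suspects = []
    if b.in_errors > threshold:
        suspects.append(f"{a.device} -> {b.device}")
    if a.in_errors > threshold:
        suspects.append(f"{b.device} -> {a.device}")
    return suspects


# Illustrative values only: B receives corrupted frames, A does not.
device_a = InterfaceCounters("Device A", in_errors=0, out_errors=0)
device_b = InterfaceCounters("Device B", in_errors=1520, out_errors=0)
print(suspect_directions(device_a, device_b))
```

With these sample values only the direction from Device A to Device B is flagged, which matches the case of a single dirty or bent fiber.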

Factors Contributing to Error Discrepancies

Several factors explain why error counts differ across link endpoints:

Physical Layer Asymmetry: Many telecommunications links, especially optical ones, use separate physical paths for TX and RX. Issues like signal attenuation, connector contamination, or fiber bends can affect one direction more severely, leading to higher error counts on the receiving end of the degraded path.

Timing and Sampling Issues: SNMP polls capture snapshots of counters, which increment independently on each device. If queries are not perfectly synchronized or if traffic bursts occur between polls, the reported counts may appear misaligned.

Protocol-Specific Counters: In telecom protocols like SONET/SDH or DS3, MIBs (e.g., SONET-MIB) include near-end (local) and far-end (remote-reported) error counters. Far-end errors, signaled via overhead bytes, reflect issues detected by the remote device, which may not align with local observations due to directional differences or equipment sensitivity.

Configuration Mismatches: Mismatched settings, such as speed, duplex, or clocking, can cause one side to detect more errors. For example, a duplex mismatch may lead to excessive collisions on one end, inflating ifOutErrors, while the other end sees ifInErrors due to corrupted frames.
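Because the raw counters on each device start from different values and are polled at different moments, comparing totals is rarely meaningful; comparing rates over a window usually is. The sketch below shows one way to turn two samples into an error rate, assuming 32-bit counters (the IF-MIB error counters are Counter32) and at most one wrap between polls. The function names are hypothetical.

```python
COUNTER32_MODULUS = 2 ** 32  # Counter32 values wrap back to 0 after 2**32 - 1


def counter_delta(previous, current, modulus=COUNTER32_MODULUS):
    """Increase between two samples, allowing for a single counter wrap."""
    return (current - previous) % modulus


def errors_per_second(prev_sample, curr_sample):
    """Error rate between two (timestamp_seconds, counter_value) samples."""
    (t0, c0), (t1, c1) = prev_sample, curr_sample
    elapsed = t1 - t0
    if elapsed <= 0:
        raise ValueError("samples must be in chronological order")
    return counter_delta(c0, c1) / elapsed


# A counter that wrapped between polls still yields a small, sane delta.
print(counter_delta(4294967290, 5))
# 300 new errors over a 60-second window.
print(errors_per_second((0, 100), (60, 400)))
```

Note that a device reboot also resets counters and would look like a wrap to this code; a real poller would need to check for counter discontinuities before trusting the delta.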

User Frustration | Misleading Responses from Providers

When a user reports degraded service and requests specific diagnostic data, such as a screenshot of an SNMP MIB walk showing error counters on both sides of a link, the response is often inadequate. A common grievance is receiving a bit rate graph instead, which shows throughput but reveals nothing about packet errors, discards, or specific interface issues. This mismatch between the requested data and the provided output is infuriating, as it fails to address the root cause of the degradation. For example:

A bit rate graph might show stable throughput, masking intermittent errors that disrupt application performance.

Without error counter data, users cannot determine whether the issue lies in the physical layer, configuration, or elsewhere.

This scenario highlights a broader issue: service providers or support teams often rely on generic, high-level metrics rather than diving into the granular, interface-specific data that SNMP MIBs provide. For a user trying to troubleshoot a critical link, this feels like a dismissive brush-off, exacerbating their frustration.
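To make the gap between a bit rate graph and error data concrete, here is a small sketch that computes both figures from the same polling window. The helper names and the sample numbers are hypothetical; the inputs are assumed to be increases in ifInOctets, ifInErrors, and delivered packets over 60 seconds.

```python
def throughput_bps(octets_delta, seconds):
    """Average bit rate over the window, as a bit rate graph would plot it."""
    return octets_delta * 8 / seconds


def error_ratio(errors_delta, delivered_packets_delta):
    """Fraction of received packets that were counted as input errors."""
    total = errors_delta + delivered_packets_delta
    return errors_delta / total if total else 0.0


# Two illustrative 60-second windows on the same interface.
healthy = {"octets": 750_000_000, "errors": 0, "delivered": 600_000}
degraded = {"octets": 742_500_000, "errors": 6_000, "delivered": 594_000}

for label, window in (("healthy", healthy), ("degraded", degraded)):
    mbps = throughput_bps(window["octets"], 60) / 1_000_000
    ratio = error_ratio(window["errors"], window["delivered"])
    print(f"{label}: {mbps:.1f} Mbit/s, error ratio {ratio:.2%}")
```

In this made-up example the two windows differ by about 1 Mbit/s on the graph, which is easy to overlook, while the error ratio moves from zero to one percent, which is enough to hurt many applications.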

The Complexity of Multi-Hop Telecommunications Paths

Modern telecommunications links rarely consist of a single point-to-point connection. Instead, they often span multiple hops—sometimes a dozen or more interfaces—across routers, switches, or optical transport equipment. Each hop represents a separate error domain, meaning errors are detected and counted independently at each interface. This complexity makes troubleshooting a degraded path akin to finding a needle in a haystack.

Error Domains in Multi-Hop Paths

Each hop in a telecommunications path introduces its own set of error counters:

Physical Layer Errors: Signal loss, framing errors, or CRC errors at each interface (CRC failures are detected by the data link layer but usually point to a physical-layer fault).

Data Link Layer Errors: Collisions, discards due to congestion, or VLAN mismatches.

Network Layer Issues: Routing loops or packet drops that may not increment interface error counters but still degrade service.

Because errors are local to each interface, a single degraded hop can cause downstream issues that are difficult to trace. For example, a fiber issue between Hop 3 and Hop 4 may cause high ifInErrors on Hop 4’s receiving interface, but without visibility into all hops, pinpointing the exact location is challenging.
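If counters for every hop are available, localizing the fault reduces to walking the path in the direction of traffic and finding the first receiving interface that logs input errors. The sketch below assumes exactly that visibility, which is often the missing piece in practice; the `first_degraded_hop` helper and the hop data are hypothetical.

```python
def first_degraded_hop(path, threshold=0):
    """Return the first hop, in traffic order, whose input errors exceed threshold.

    path is an ordered list of (hop_name, in_errors_delta) tuples, where
    in_errors_delta is the increase in ifInErrors on the receiving
    interface of that hop during the polling window.
    """
    for hop_name, in_errors_delta in path:
        if in_errors_delta > threshold:
            return hop_name
    return None


# Illustrative values: the segment feeding Hop 4 is damaged.
path = [
    ("Hop 1", 0),
    ("Hop 2", 0),
    ("Hop 3", 0),
    ("Hop 4", 870),
    ("Hop 5", 0),
]
print(first_degraded_hop(path))
```

Hops after the faulty segment may show no errors at all, because a store-and-forward device normally drops a corrupted frame where it is detected; downstream devices then see missing traffic rather than bad frames.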

Troubleshooting Tools | LLDP, Ping, and MAC Tables

To trace a multi-hop path, network administrators often rely on a combination of tools, each with its own limitations:

Link Layer Discovery Protocol (LLDP): LLDP (or its Cisco equivalent, CDP) allows devices to advertise their identity and connectivity to neighbors. By querying LLDP tables (via MIBs like LLDP-MIB), users can map the topology of a link hop by hop. However:

Not all devices support LLDP, especially older or non-standard equipment.

LLDP data may be incomplete if devices are misconfigured or if the protocol is disabled.

In multi-vendor environments, interoperability issues can lead to missing or inconsistent LLDP data.

Ping: Ping is a simple tool to verify connectivity and measure latency, but it’s a blunt instrument. It can confirm that a path is up but provides no insight into specific interface errors or the physical topology. If a link is degraded but not completely down, ping may show normal results despite underlying issues.

MAC Address Tables: MAC tables (accessible via SNMP or CLI) can help trace the path of frames through switches, but they are notoriously unreliable for troubleshooting complex paths:

MAC tables are dynamic: entries age out after an idle timer (commonly a few minutes), so a quiet host can disappear from the table before it is captured.

In large networks with VLANs or trunking, MAC tables may not clearly indicate the physical path.

Accessing MAC tables often requires manual CLI interaction, which is time-consuming and error-prone compared to automated SNMP queries.

As one frustrated user put it, “MAC tables suck.” These tools, while useful, often feel like patchwork solutions for a problem that should have more robust, standardized approaches in 2025.
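Hop-by-hop mapping from LLDP neighbor data can be sketched as a graph search. The example assumes the neighbor tables have already been collected (for instance from lldpRemTable) into a plain dictionary; the device names, port names, and the `trace_path` helper are hypothetical.

```python
from collections import deque


def trace_path(neighbors, source, destination):
    """Breadth-first search over LLDP adjacencies.

    neighbors maps device -> {local_port: remote_device}.
    Returns a list of (device, local_port, next_device) hops, or None when
    the adjacency data does not connect source to destination.
    """
    came_from = {source: None}
    queue = deque([source])
    while queue:
        device = queue.popleft()
        if device == destination:
            break
        for port, remote in neighbors.get(device, {}).items():
            if remote not in came_from:
                came_from[remote] = (device, port)
                queue.append(remote)
    if destination not in came_from:
        return None
    hops = []
    node = destination
    while came_from[node] is not None:
        device, port = came_from[node]
        hops.append((device, port, node))
        node = device
    hops.reverse()
    return hops


# Illustrative topology; every name here is made up.
neighbors = {
    "cpe-a": {"ge-0/0/1": "agg-1"},
    "agg-1": {"xe-0/1/0": "core-1", "ge-0/0/5": "cpe-a"},
    "core-1": {"xe-1/0/0": "agg-2", "xe-0/0/0": "agg-1"},
    "agg-2": {"ge-0/0/7": "cpe-b", "xe-0/1/0": "core-1"},
}
for device, port, next_device in trace_path(neighbors, "cpe-a", "cpe-b"):
    print(f"{device} [{port}] -> {next_device}")
```

A `None` result is itself informative: it usually means some device along the path has LLDP disabled or does not support it, which is exactly the gap described above.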

The Persistent Pain of Outdated Practices

Perhaps the most galling aspect of troubleshooting telecommunications issues in 2025 is the persistence of practices that were already problematic in 1995. Despite three decades of advancements in networking technology, many service providers and network management systems still rely on outdated or inadequate methods:

Lack of Granular Diagnostics: As noted, receiving a bit rate graph instead of error counter data is a common issue. This reflects a failure to prioritize detailed, interface-level telemetry, which SNMP was designed to provide.

Manual Troubleshooting: Tools like LLDP, ping, and MAC tables require significant manual effort to correlate data across multiple devices. Modern networks should offer automated path tracing and error localization, yet these capabilities remain rare.

Inconsistent Standards: While SNMP and LLDP are standardized, their implementation varies across vendors, leading to gaps in data or compatibility issues. This problem was well-known in the 1990s and persists today.

Reactive Support: Service providers often respond reactively, sending generic reports rather than proactively analyzing error counters or path-specific issues. This leaves users to do the heavy lifting of diagnosis.

The user’s exasperation—“It’s 2025 and they’re still not doing things that were known about in 1995 🤬”—captures the sentiment of being stuck in a technological time warp. In an era of AI-driven analytics and software-defined networking, the reliance on manual, fragmented troubleshooting feels inexcusable.

Strategies for Effective Troubleshooting

Despite these challenges, network administrators can take steps to improve their ability to diagnose and resolve link degradation:

Request Specific SNMP Data: When engaging with a service provider, explicitly request SNMP MIB walk data for ifInErrors, ifOutErrors, and protocol-specific counters (e.g., SONET-MIB for optical links). Provide the exact OIDs (e.g., 1.3.6.1.2.1.2.2.1.14 for ifInErrors and 1.3.6.1.2.1.2.2.1.20 for ifOutErrors) to avoid miscommunication, and ask for two walks taken a known interval apart so that rates can be calculated.

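Correlate Directional Counters: Compare ifOutErrors on one device with ifInErrors on the receiving device to identify directional issues. For example, if Device B’s ifInErrors are climbing while Device A’s ifOutErrors stay flat, the fault most likely lies in the medium or optics between A’s transmitter and B’s receiver. If Device A’s ifOutErrors are climbing instead, the problem is local to A’s transmit side, such as a duplex mismatch or a failing interface.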

Use LLDP for Path Mapping: Despite its limitations, LLDP is often the most practical tool for tracing multi-hop paths, since it reports directly connected neighbors and the ports they are attached to. Query LLDP tables (lldpRemTable in LLDP-MIB) to build a topology map, and cross-reference with physical documentation if available.

Automate Where Possible: Use network management tools to automate SNMP polling and correlate error data across devices. Tools like Zabbix, Nagios, or custom scripts can reduce manual effort and provide real-time alerts for error spikes.

Test the Physical Layer: If error counters suggest a directional issue, test the physical media (e.g., fiber cleaning, cable replacement, or optical power measurements) to rule out hardware faults.

Push for Better Support: Advocate for providers to deliver detailed, relevant diagnostics rather than generic graphs. Escalate issues to technical teams if initial responses are inadequate.
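Once a provider does supply a MIB walk, the error and discard columns can be pulled out mechanically. The sketch below assumes the walk is plain text in numeric-OID form, one `OID = Counter32: value` entry per line (the style produced by Net-SNMP's `snmpwalk -On`); other tools format their output differently. The OIDs are the standard IF-MIB ifTable columns, while the `parse_walk` helper and the sample values are hypothetical.

```python
import re

# Standard IF-MIB ifTable columns relevant to link degradation.
IF_TABLE_COLUMNS = {
    "1.3.6.1.2.1.2.2.1.13": "ifInDiscards",
    "1.3.6.1.2.1.2.2.1.14": "ifInErrors",
    "1.3.6.1.2.1.2.2.1.19": "ifOutDiscards",
    "1.3.6.1.2.1.2.2.1.20": "ifOutErrors",
}

# Assumed line format: ".<column OID>.<ifIndex> = Counter32: <value>"
WALK_LINE = re.compile(
    r"^\.?(?P<oid>[\d.]+)\.(?P<index>\d+) = Counter32: (?P<value>\d+)$"
)


def parse_walk(text):
    """Return {ifIndex: {counter_name: value}} for the columns listed above."""
    counters = {}
    for line in text.splitlines():
        match = WALK_LINE.match(line.strip())
        if not match:
            continue  # skip lines in any other format
        name = IF_TABLE_COLUMNS.get(match["oid"])
        if name is None:
            continue  # skip columns we are not interested in
        index = int(match["index"])
        counters.setdefault(index, {})[name] = int(match["value"])
    return counters


# Illustrative walk output; the values are made up.
sample = """
.1.3.6.1.2.1.2.2.1.14.1 = Counter32: 0
.1.3.6.1.2.1.2.2.1.14.2 = Counter32: 1520
.1.3.6.1.2.1.2.2.1.20.2 = Counter32: 3
"""
print(parse_walk(sample))
```

Running the same parser over walks from both ends of a link, taken at two points in time, gives the raw material for the directional comparison described above.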

Wrap | Troubleshooting Degraded Telecommunications Links

Troubleshooting degraded telecommunications links is a complex and often frustrating task, compounded by the inherent asymmetry of error counters, the challenges of multi-hop paths, and the limitations of tools like LLDP, ping, and MAC tables. The user’s experience—requesting specific SNMP MIB walk data and receiving a useless bit rate graph—underscores a broader issue: the gap between user expectations and provider support in 2025. While SNMP and related protocols offer powerful diagnostic capabilities, their effectiveness is diminished by outdated practices, inconsistent implementations, and a lack of proactive support. By understanding why error counters differ, leveraging available tools strategically, and demanding better diagnostics, network administrators can navigate these challenges more effectively. However, the industry as a whole must move beyond 1990s-era approaches to deliver the automated, granular telemetry that modern networks demand. Until then, the frustration of users grappling with degraded service will remain a persistent reality.